Agents

Agents orchestrate LLM calls, tool executions, conditional logic, and data transformations into repeatable pipelines. almyty supports two agent modes: Workflow agents defined as visual DAGs, and Autonomous agents configured with instructions, tools, and memory.

Agent Modes

| Mode | Best for | How it works |
|---|---|---|
| Workflow | Deterministic multi-step pipelines | Visual DAG builder with 10 node types connected by edges |
| Autonomous | Open-ended reasoning tasks | Form-based config: system instructions, attached tools, memory, and optional collaboration with other agents |

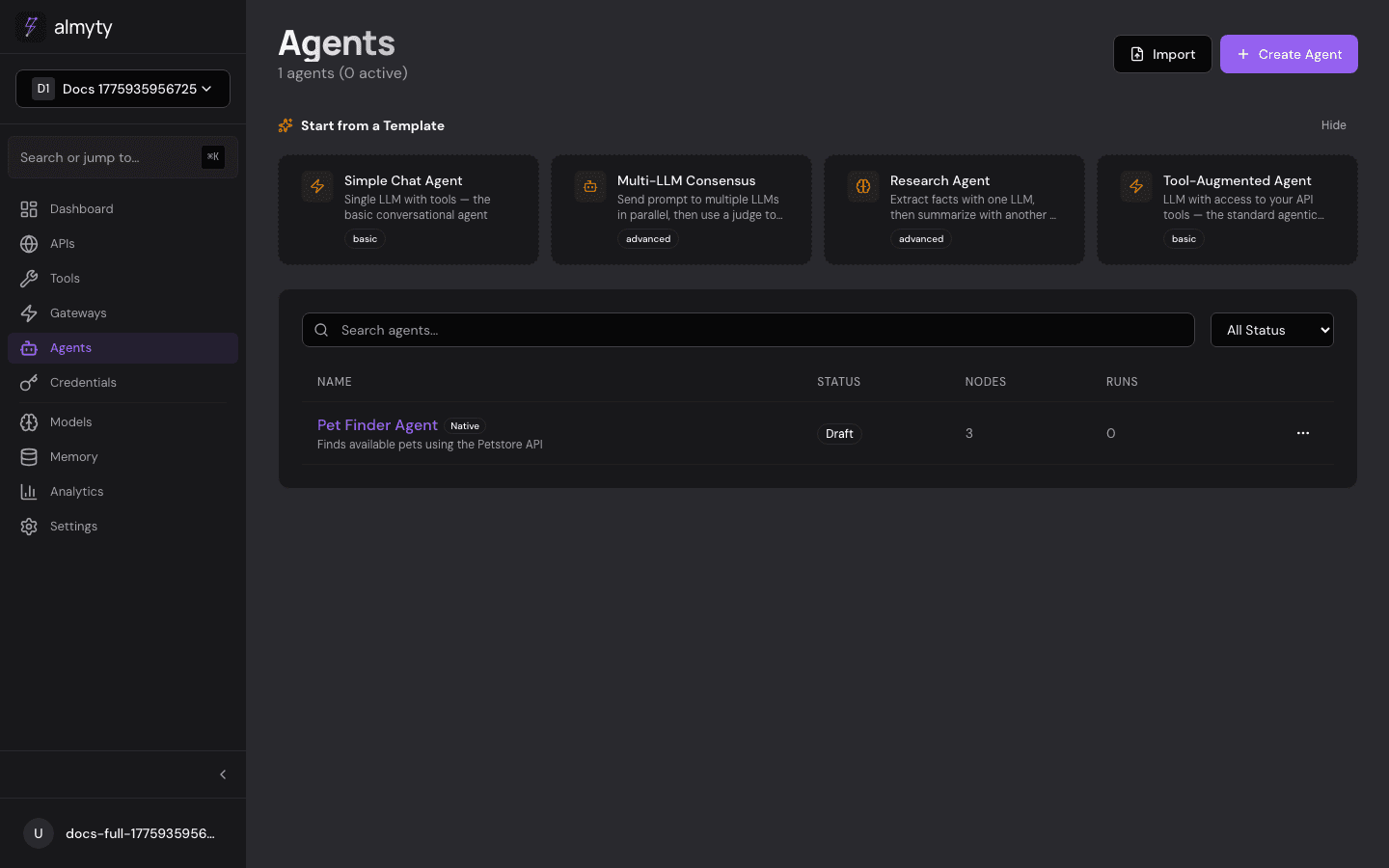

In the UI

Creating a Workflow Agent

- Click Agents in the sidebar

- Click Create Agent

- Select the Workflow tab

- Enter a name and optional description

- The canvas opens with a default Input, LLM Call, and Output pipeline

- Drag nodes from the left palette, connect them, and configure each node in the right panel

- Click Save to store the agent as a draft

Creating an Autonomous Agent

- Click Agents in the sidebar

- Click Create Agent

- Select the Autonomous tab

- Enter a name and system instructions describing the agent’s behavior

- Attach tools the agent can call

- Optionally enable memory and configure collaboration with other agents

- Click Save

Managing Agents

From the agents list you can:

- Activate / Deactivate an agent using the status toggle

- Duplicate an agent to create a copy

- Import an agent from JSON using the Import button in the top bar

- Delete an agent from its detail page

Via the API

Create a Workflow Agent

    curl -X POST /agents \
      -H "Authorization: Bearer $TOKEN" \
      -H "Content-Type: application/json" \
      -d '{
        "name": "Research Agent",
        "description": "Searches and summarizes information",
        "mode": "workflow",
        "pipeline": {
          "nodes": [
            { "id": "input_1", "type": "input", "data": {
              "schema": { "type": "object", "properties": { "query": { "type": "string" } }, "required": ["query"] }
            }},
            { "id": "llm_1", "type": "llm_call", "data": {
              "providerId": "provider-uuid",
              "systemPrompt": "You are a research assistant.",
              "userPromptTemplate": "Research: {{input.query}}"
            }},
            { "id": "output_1", "type": "output", "data": {
              "mapping": "{{nodes.llm_1.output}}"
            }}
          ],
          "edges": [
            { "id": "e1", "source": "input_1", "target": "llm_1" },
            { "id": "e2", "source": "llm_1", "target": "output_1" }
          ]
        }
      }'

Create an Autonomous Agent

    curl -X POST /agents \
      -H "Authorization: Bearer $TOKEN" \
      -H "Content-Type: application/json" \
      -d '{
        "name": "Support Agent",
        "mode": "autonomous",
        "instructions": "You are a customer support agent. Answer questions using the knowledge base tool.",
        "toolIds": ["tool-uuid-1", "tool-uuid-2"],
        "memoryEnabled": true
      }'

Invoke an Agent

    curl -X POST /agents/{id}/invoke \
      -H "Authorization: Bearer $TOKEN" \
      -H "Content-Type: application/json" \
      -d '{
        "input": { "query": "What is the weather in Berlin?" }
      }'

Response:

    {
      "success": true,
      "data": {
        "output": "The current weather in Berlin is 12C with partly cloudy skies.",
        "executionId": "exec-uuid",
        "duration": 2340,
        "tokensUsed": 156
      }
    }

Import and Export

    # Export
    curl /agents/{id}/export \
      -H "Authorization: Bearer $TOKEN" > agent.json

    # Import
    curl -X POST /agents/import \
      -H "Authorization: Bearer $TOKEN" \
      -H "Content-Type: application/json" \
      -d @agent.json

Agent Lifecycle

| Status | Description |
|---|---|
| draft | Agent is being built and is not yet invokable |
| active | Agent is live and accepting invocations |
| inactive | Agent is paused; invocations are rejected |
| error | Agent has a configuration issue |
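As a minimal client-side sketch of the table above, the status values can be modeled as an enum with a guard that runs before an invocation is attempted. The names `AgentStatus` and `can_invoke` are hypothetical and not part of the almyty API. Treating `error` as non-invokable is an assumption, since the table only says that such an agent has a configuration issue.

```python
from enum import Enum


class AgentStatus(str, Enum):
    """The four lifecycle statuses listed in the table (hypothetical helper type)."""
    DRAFT = "draft"
    ACTIVE = "active"
    INACTIVE = "inactive"
    ERROR = "error"


def can_invoke(status: str) -> bool:
    """Return True only for 'active' agents.

    'draft' is not yet invokable and 'inactive' rejects invocations, per the table.
    Treating 'error' the same way is an assumption of this sketch.
    Unknown status strings raise ValueError.
    """
    return AgentStatus(status) is AgentStatus.ACTIVE
```

A caller could check `can_invoke(agent_status)` before sending an invoke request and surface a clearer message than a rejected call would.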

Execution Model

When a workflow agent is invoked, almyty:

- Validates the input against the Input node’s schema

- Topologically sorts the pipeline nodes

- Executes each node in dependency order, passing data along edges

- Resolves template expressions ({{nodes.llm_1.output}}) at runtime

- Returns the Output node’s result
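The steps above can be sketched in a few lines of Python. This is an illustrative model under stated assumptions, not almyty's actual engine: validation only checks the schema's `required` keys, only the `input`, `llm_call`, and `output` node types from the earlier example are handled, and `fake_llm`, `render`, `lookup`, and `run_pipeline` are hypothetical names. The sketch uses the standard-library `graphlib` module (Python 3.9+) for the topological sort.

```python
import re
from graphlib import TopologicalSorter

# Matches template expressions such as {{input.query}} or {{nodes.llm_1.output}}.
TEMPLATE = re.compile(r"\{\{\s*([\w.]+)\s*\}\}")

# The three-node pipeline from the "Create a Workflow Agent" example.
PIPELINE = {
    "nodes": [
        {"id": "input_1", "type": "input", "data": {
            "schema": {"type": "object",
                       "properties": {"query": {"type": "string"}},
                       "required": ["query"]}}},
        {"id": "llm_1", "type": "llm_call", "data": {
            "providerId": "provider-uuid",
            "systemPrompt": "You are a research assistant.",
            "userPromptTemplate": "Research: {{input.query}}"}},
        {"id": "output_1", "type": "output", "data": {
            "mapping": "{{nodes.llm_1.output}}"}},
    ],
    "edges": [
        {"id": "e1", "source": "input_1", "target": "llm_1"},
        {"id": "e2", "source": "llm_1", "target": "output_1"},
    ],
}


def lookup(path, context):
    """Walk a dotted path such as 'nodes.llm_1.output' through nested dicts."""
    value = context
    for key in path.split("."):
        value = value[key]
    return value


def render(template, context):
    """Replace every {{path}} expression with its value from the context."""
    return TEMPLATE.sub(lambda m: str(lookup(m.group(1), context)), template)


def fake_llm(system_prompt, user_prompt):
    """Hypothetical stand-in for a provider call; echoes the prompt back."""
    return f"[answer to: {user_prompt}]"


def run_pipeline(pipeline, payload, llm=fake_llm):
    nodes = {node["id"]: node for node in pipeline["nodes"]}

    # 1. Validate the input (assumption: only the schema's required keys are checked).
    for node in nodes.values():
        if node["type"] == "input":
            required = node["data"].get("schema", {}).get("required", [])
            missing = [key for key in required if key not in payload]
            if missing:
                raise ValueError(f"missing required input fields: {missing}")

    # 2. Topologically sort: each edge makes its target depend on its source.
    sorter = TopologicalSorter({node_id: set() for node_id in nodes})
    for edge in pipeline["edges"]:
        sorter.add(edge["target"], edge["source"])

    # 3 and 4. Execute in dependency order, resolving templates at runtime.
    context = {"input": payload, "nodes": {}}
    result = None
    for node_id in sorter.static_order():
        node = nodes[node_id]
        data = node["data"]
        if node["type"] == "input":
            output = payload
        elif node["type"] == "llm_call":
            output = llm(data["systemPrompt"], render(data["userPromptTemplate"], context))
        elif node["type"] == "output":
            output = result = render(data["mapping"], context)
        else:
            raise NotImplementedError(f"node type not covered by this sketch: {node['type']}")
        context["nodes"][node_id] = {"output": output}

    # 5. Return the Output node's result.
    return result


print(run_pipeline(PIPELINE, {"query": "tides"}))  # [answer to: Research: tides]
```

Storing each node's result under `context["nodes"][id]["output"]` is what lets a later node refer to an earlier one with an expression like `{{nodes.llm_1.output}}`. The topological sort guarantees that the referenced node has already run.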

When an autonomous agent is invoked, almyty sends the input to the LLM with the configured instructions and tools, letting the model decide which tools to call and how to compose the final response.
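The autonomous mode can be pictured as a loop in which the model either requests a tool or returns a final answer. The sketch below is a generic tool-calling loop under stated assumptions, not almyty's implementation: the message format, the `max_steps` limit, and the names `run_autonomous`, `scripted_model`, and `knowledge_base` are all hypothetical. The scripted model and its canned reply stand in for a real LLM and tool so that the example runs offline.

```python
def run_autonomous(instructions, user_input, tools, model, max_steps=5):
    """Let the model pick tools until it produces a final answer.

    tools: dict mapping a tool name to a Python callable.
    model: callable(messages, tool_names) returning either
           {"type": "tool_call", "tool": name, "arguments": {...}} or
           {"type": "final", "content": text}.
    """
    messages = [
        {"role": "system", "content": instructions},
        {"role": "user", "content": user_input},
    ]
    for _ in range(max_steps):
        decision = model(messages, sorted(tools))
        if decision["type"] == "final":
            return decision["content"]
        # The model asked for a tool: run it and feed the result back.
        tool_output = tools[decision["tool"]](**decision["arguments"])
        messages.append(
            {"role": "tool", "name": decision["tool"], "content": str(tool_output)}
        )
    raise RuntimeError("model did not produce a final answer within max_steps")


def scripted_model(messages, tool_names):
    """Hypothetical stand-in for an LLM: calls a tool once, then answers."""
    tool_messages = [m for m in messages if m["role"] == "tool"]
    if not tool_messages:
        question = messages[-1]["content"]
        return {"type": "tool_call", "tool": "knowledge_base",
                "arguments": {"query": question}}
    return {"type": "final",
            "content": f"According to the knowledge base: {tool_messages[-1]['content']}"}


def knowledge_base(query):
    """Hypothetical tool with a canned, made-up reply for illustration."""
    return "Refunds are processed within 5 business days."


answer = run_autonomous(
    "You are a customer support agent. Answer questions using the knowledge base tool.",
    "How long do refunds take?",
    {"knowledge_base": knowledge_base},
    scripted_model,
)
print(answer)  # According to the knowledge base: Refunds are processed within 5 business days.
```

The step limit is a design choice of this sketch: because the model controls the flow, a bound keeps a misbehaving model from looping indefinitely.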

Templates

almyty ships with built-in templates to get started quickly:

| Template | Description |
|---|---|
| Simple Chat | Input, LLM, Output: the simplest possible agent |
| Research Agent | Chains multiple LLM calls with web search tools |
| Tool-Augmented | LLM with tool calling for dynamic API interaction |
| Multi-Step Pipeline | Conditional branching with data transformations |

Select a template when creating an agent to get a pre-wired pipeline.