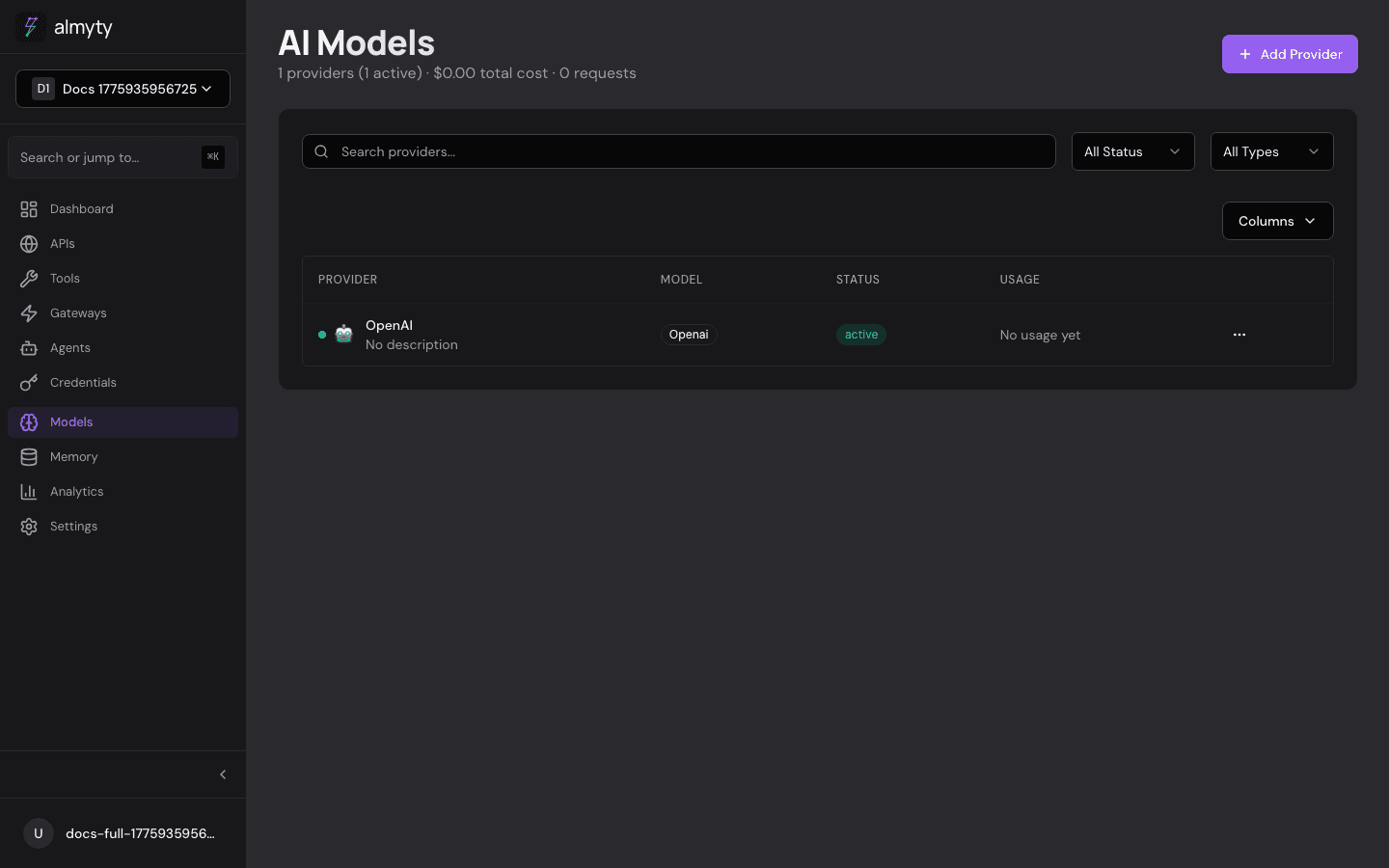

AI Models

AI Models connect language model providers to your agent pipelines and LLM-powered tools. almyty supports 14 providers out of the box, including a Custom option for any OpenAI-compatible endpoint.

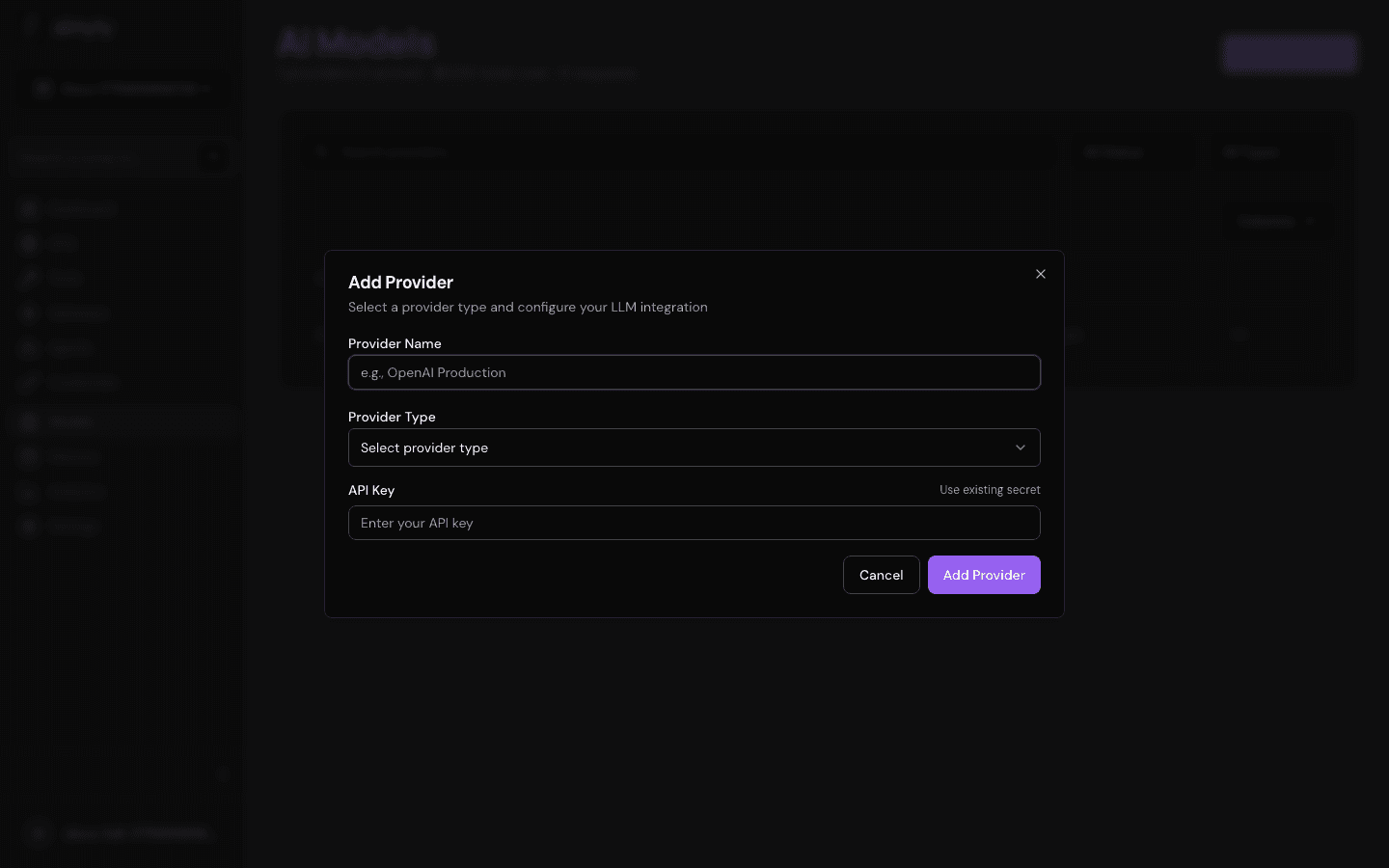

In the UI

- Navigate to Models in the sidebar

- Click Add Provider

- Select the provider type

- Enter a display name and your API key

- Choose a default model from the dropdown

- Optionally adjust temperature, max tokens, and top-p

- Click Test Connection; almyty sends a lightweight request to verify the key and model

- Click Save

The provider now appears in the Models list and is available in agent pipeline LLM Call nodes and LLM-type tools.

Via the API

Add a provider

```bash
curl -X POST /llm-providers \
  -H "Authorization: Bearer $TOKEN" \
  -H "Content-Type: application/json" \
  -d '{
    "name": "OpenAI Production",
    "provider": "openai",
    "apiKey": "sk-...",
    "model": "gpt-4o"
  }'
```

Test connection

```bash
curl -X POST /llm-providers/{id}/test \
  -H "Authorization: Bearer $TOKEN"
```

The response includes the model name, response time, and status.

Chat

```bash
curl -X POST /llm-providers/{id}/chat \
  -H "Authorization: Bearer $TOKEN" \
  -H "Content-Type: application/json" \
  -d '{
    "message": "What can you help me with?",
    "sessionId": "session-uuid"
  }'
```

Sessions are stored server-side and keep the full message history, so later messages sent with the same sessionId continue the same conversation.
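A multi-turn conversation then amounts to reusing one sessionId across calls. The Python sketch below assumes the client may choose the sessionId itself (here a random UUID, matching the `session-uuid` placeholder above); `BASE_URL`, `ChatSession`, and the `transport` hook are hypothetical names for illustration, and the shape of the chat response is not specified here, so it is returned as a plain dict.

```python
import json
import urllib.request
import uuid
from typing import Callable

# Hypothetical placeholder; replace with your almyty API origin.
BASE_URL = "https://almyty.example.com"


def _send(req: urllib.request.Request) -> dict:
    """Default transport: perform the request and decode the JSON response."""
    with urllib.request.urlopen(req, timeout=60) as resp:
        return json.loads(resp.read().decode("utf-8"))


class ChatSession:
    """Sends every message with the same sessionId so the server keeps history."""

    def __init__(self, provider_id: str, token: str,
                 transport: Callable[[urllib.request.Request], dict] = _send):
        self.provider_id = provider_id
        self.token = token
        # Assumption: any client-generated UUID is accepted as a sessionId.
        self.session_id = str(uuid.uuid4())
        self.transport = transport

    def send(self, message: str) -> dict:
        body = json.dumps({"message": message, "sessionId": self.session_id})
        req = urllib.request.Request(
            f"{BASE_URL}/llm-providers/{self.provider_id}/chat",
            data=body.encode("utf-8"), method="POST")
        req.add_header("Authorization", f"Bearer {self.token}")
        req.add_header("Content-Type", "application/json")
        return self.transport(req)
```

Creating a new `ChatSession` yields a new sessionId, which presumably starts a conversation with no prior history.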

Update a provider

```bash
curl -X PATCH /llm-providers/{id} \
  -H "Authorization: Bearer $TOKEN" \
  -H "Content-Type: application/json" \
  -d '{
    "model": "gpt-4o-mini",
    "configuration": { "temperature": 0.5 }
  }'
```

Delete a provider

```bash
curl -X DELETE /llm-providers/{id} \
  -H "Authorization: Bearer $TOKEN"
```

Supported providers

| Provider | Type | Notes |
|---|---|---|
| OpenAI | openai | GPT-4o, GPT-4o-mini, o1, o3, etc. |
| Anthropic | anthropic | Claude Opus, Sonnet, Haiku |
| Google Gemini | openai | Via OpenAI-compatible endpoint |
| Azure OpenAI | openai | Set baseUrl to your Azure deployment |
| AWS Bedrock | openai | Via OpenAI-compatible proxy |
| Google Vertex | openai | Via OpenAI-compatible proxy |
| Mistral | openai | Set baseUrl to https://api.mistral.ai/v1 |
| Cohere | openai | Via OpenAI-compatible endpoint |
| Groq | openai | Set baseUrl to https://api.groq.com/openai/v1 |
| Together | openai | Set baseUrl to https://api.together.xyz/v1 |
| Perplexity | openai | Set baseUrl to https://api.perplexity.ai |
| DeepSeek | openai | Set baseUrl to https://api.deepseek.com/v1 |
| Ollama | openai | Set baseUrl to http://localhost:11434/v1 |
| Custom | openai | Any OpenAI-compatible HTTP endpoint |

For every provider listed as type openai other than OpenAI itself, set the baseUrl field to that provider’s OpenAI-compatible endpoint; OpenAI uses the default baseUrl. The apiKey field accepts whatever token the provider expects.
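Building the create payload for an OpenAI-compatible provider mostly comes down to choosing the right baseUrl. The Python sketch below copies the fixed endpoints from the table; providers whose endpoint depends on your own deployment or proxy (Azure OpenAI, AWS Bedrock, Google Vertex, Custom, and the rest) must be given an explicit URL. `KNOWN_BASE_URLS` and `provider_payload` are hypothetical helpers, and sending baseUrl as a top-level field of the create body is an assumption based on the configuration reference, since the create example omits it.

```python
from typing import Optional

# Fixed endpoints from the Supported providers table. Deployment-specific
# providers are deliberately absent and need an explicit base_url.
KNOWN_BASE_URLS = {
    "openai": "https://api.openai.com/v1",
    "mistral": "https://api.mistral.ai/v1",
    "groq": "https://api.groq.com/openai/v1",
    "together": "https://api.together.xyz/v1",
    "perplexity": "https://api.perplexity.ai",
    "deepseek": "https://api.deepseek.com/v1",
    "ollama": "http://localhost:11434/v1",
}


def provider_payload(name: str, vendor: str, api_key: str, model: str,
                     base_url: Optional[str] = None) -> dict:
    """Build a POST /llm-providers body for an OpenAI-compatible vendor."""
    url = base_url or KNOWN_BASE_URLS.get(vendor.lower())
    if url is None:
        raise ValueError(f"no known baseUrl for {vendor!r}; pass base_url")
    return {
        "name": name,
        "provider": "openai",  # every vendor handled here uses the openai type
        "apiKey": api_key,
        "model": model,
        "baseUrl": url,  # assumption: accepted as a top-level create field
    }
```

Raising on unknown vendors, rather than silently falling back to the OpenAI default, avoids sending one provider's key to another provider's endpoint.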

Configuration reference

| Parameter | Type | Default | Description |
|---|---|---|---|
| name | string | — | Display name |
| provider | string | — | openai or anthropic |
| apiKey | string | — | Provider API key (encrypted at rest) |
| model | string | — | Default model identifier |
| baseUrl | string | https://api.openai.com/v1 | Override for compatible APIs |
| temperature | number | 0.7 | Sampling temperature (0-2) |
| maxTokens | number | 4096 | Maximum response tokens |
| topP | number | 1.0 | Nucleus sampling probability mass (0-1) |
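The sampling parameters can be checked client-side before they are sent. This Python sketch applies the defaults and ranges from the table above; the positive-maxTokens rule is an assumption, and `SamplingConfig` is a hypothetical helper rather than part of almyty. Individual providers may enforce narrower ranges (Anthropic, for example, accepts temperature only up to 1), so a value that passes here can still be rejected upstream.

```python
from dataclasses import dataclass


@dataclass
class SamplingConfig:
    """Sampling parameters with the defaults from the configuration reference."""
    temperature: float = 0.7
    max_tokens: int = 4096
    top_p: float = 1.0

    def __post_init__(self) -> None:
        # Ranges from the table; positive max_tokens is an assumption.
        if not 0 <= self.temperature <= 2:
            raise ValueError(f"temperature must be in [0, 2], got {self.temperature}")
        if self.max_tokens <= 0:
            raise ValueError(f"maxTokens must be positive, got {self.max_tokens}")
        if not 0 <= self.top_p <= 1:
            raise ValueError(f"topP must be in [0, 1], got {self.top_p}")

    def to_json(self) -> dict:
        """Map Python field names to the API's camelCase parameter names."""
        return {"temperature": self.temperature,
                "maxTokens": self.max_tokens,
                "topP": self.top_p}
```

For example, `SamplingConfig(temperature=2.5)` raises `ValueError`, while `SamplingConfig().to_json()` reproduces the table's defaults.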

Cost tracking

almyty tracks token usage and estimated cost for every LLM call. View aggregate costs per provider in the Analytics dashboard or on the provider detail page.
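How almyty computes its estimate is not described here. A common approach, assumed in this Python sketch, multiplies input and output token counts by separate per-million-token prices. The prices below are hypothetical and illustrative only; substitute your provider's published rates.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Pricing:
    """Hypothetical per-million-token prices in USD."""
    input_per_million: float
    output_per_million: float


def estimate_cost(input_tokens: int, output_tokens: int, pricing: Pricing) -> float:
    """Cost = (input tokens * input price + output tokens * output price) / 1e6."""
    return (input_tokens * pricing.input_per_million
            + output_tokens * pricing.output_per_million) / 1_000_000


example = Pricing(input_per_million=2.0, output_per_million=8.0)  # illustrative only
print(f"${estimate_cost(1_200, 350, example):.4f}")  # prints "$0.0052"
```

Pricing input and output separately matters because many providers charge more for generated tokens than for prompt tokens.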

Usage in agents

When building an agent pipeline, LLM Call nodes reference a provider by ID. The provider’s default model and parameters are used unless overridden in the node configuration. See Agents for details.
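The fallback rule (node settings override provider defaults) can be pictured as a shallow merge. In this Python sketch, `effective_settings` is a hypothetical helper, and treating a node value of `None` as "not set" is an assumption about how unset fields behave.

```python
from typing import Any, Mapping


def effective_settings(provider_defaults: Mapping[str, Any],
                       node_overrides: Mapping[str, Any]) -> dict:
    """Node values win; keys the node leaves unset (or None) keep the provider default."""
    merged = dict(provider_defaults)
    merged.update({k: v for k, v in node_overrides.items() if v is not None})
    return merged


provider = {"model": "gpt-4o", "temperature": 0.7, "maxTokens": 4096, "topP": 1.0}
node = {"temperature": 0.2, "maxTokens": None}
print(effective_settings(provider, node))
# {'model': 'gpt-4o', 'temperature': 0.2, 'maxTokens': 4096, 'topP': 1.0}
```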